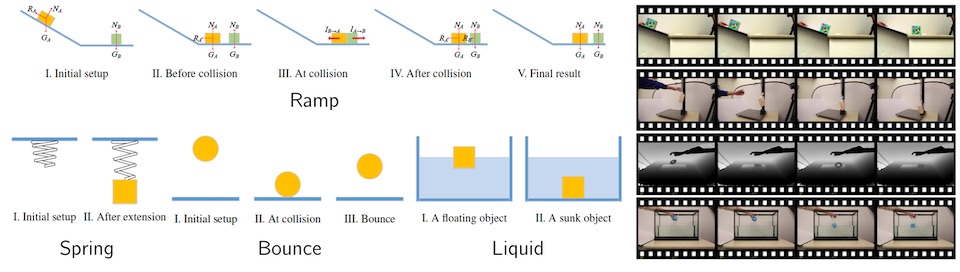

Figure 1: Scenarios and videos in the Physics 101 dataset

Abstract

We study the problem of learning physical properties of objects from unlabeled videos. Humans can learn basic physical laws at a very young age, which suggests that learning them may be an important goal for computational vision systems. We consider various scenarios, such as objects sliding down an inclined surface and colliding, objects attached to a spring, and objects falling onto various surfaces. Many physical properties, such as mass, density, and coefficient of restitution, influence the outcomes of these scenarios, and our goal is to recover them automatically. We have collected over 10,000 video clips containing 101 objects of various materials and appearances (shapes, colors, and sizes). Together, they form a dataset, named Physics 101, for studying object-centered physical properties. We propose an unsupervised representation learning model that explicitly encodes basic physical laws into its structure and uses them, together with observations automatically discovered from videos, as supervision. Experiments demonstrate that our model can learn physical properties of objects from videos. We also illustrate how its generative nature enables it to solve other tasks, such as outcome prediction.
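To illustrate how a known physical law can turn video observations into supervision for a hidden property, the sketch below inverts two textbook laws by hand. It is a minimal sketch under stated assumptions, not the model proposed here, which learns from video rather than using closed-form inversion. The assumptions are as follows. Tracked positions along a ramp are synthetic, and the object starts at rest. Sliding obeys a = g(sin θ − μ cos θ), with μ a kinetic friction coefficient introduced only for illustration. A bounce obeys e = sqrt(h_bounce / h_drop). The function names `fit_acceleration`, `friction_from_incline`, and `restitution_from_bounce` are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed constant)


def fit_acceleration(times, positions):
    """Least-squares fit of s(t) = 0.5 * a * t**2.

    Assumes (hypothetically) that the object starts at rest at s = 0.
    Minimizing sum_i (s_i - c * t_i**2)**2 over c gives
    c = sum(s_i * t_i**2) / sum(t_i**4), and a = 2c.
    """
    num = sum(s * t ** 2 for t, s in zip(times, positions))
    den = sum(t ** 4 for t in times)
    return 2.0 * num / den


def friction_from_incline(accel, theta):
    """Invert a = g * (sin(theta) - mu * cos(theta)) for mu."""
    return (G * math.sin(theta) - accel) / (G * math.cos(theta))


def restitution_from_bounce(drop_height, bounce_height):
    """Coefficient of restitution e = sqrt(h_bounce / h_drop)."""
    return math.sqrt(bounce_height / drop_height)


# Synthetic "tracked" positions for a 20-degree ramp with mu = 0.3.
theta = math.radians(20.0)
true_mu = 0.3
true_accel = G * (math.sin(theta) - true_mu * math.cos(theta))
times = [0.1 * k for k in range(1, 11)]
positions = [0.5 * true_accel * t ** 2 for t in times]

accel_hat = fit_acceleration(times, positions)
mu_hat = friction_from_incline(accel_hat, theta)
e_hat = restitution_from_bounce(1.0, 0.64)
print(f"estimated mu = {mu_hat:.3f}, estimated e = {e_hat:.3f}")
```

The law fixes the functional form, so a single scalar fit on observed motion recovers the latent property. In real video, positions would come from noisy tracking, and the least-squares fit would average out some of that noise.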